Your data warehouse has fourteen columns that contain some version of the word "revenue." Gross revenue, net revenue, total_price, subtotal, total_sales. Each means something different, lives in a different table, and refreshes on a different schedule. Making AI answers accurate for eCommerce starts here, at the data layer. When an AI tool queries your warehouse, it picks one of those columns. It does not know which one your finance team reconciles against.

Gartner projects that by 2027, organizations prioritizing semantics in AI-ready data will increase agentic AI accuracy by up to 80%. The variable behind that gain is the structured business context that sits between the model and the data. For eCommerce brands building toward an AI-ready data foundation, the context layer separates tools that guess from tools that know. This article explains what that layer is, why eCommerce data breaks AI without it, and how to build or buy one.

What a Context Layer Is

A context layer (sometimes called a semantic layer) is the structured body of knowledge between your raw eCommerce data and any AI or analytics tool querying it. It translates technical column names into business definitions, locks in how every metric gets calculated, and tells the AI which tables to join, which filters to apply, and what the result should mean.

Anyone evaluating a context layer for eCommerce AI or a semantic layer for eCommerce analytics is looking at the same concept: infrastructure that forces every downstream tool to produce consistent outputs.

Think of a new analyst joining your team. They have database access, but nobody has briefed them. They do not know that "net revenue" at your company means gross revenue minus returns minus discounts minus tax, not the total_price field in Shopify. They do not know that Amazon orders overlap with Shopify for the same customers and need deduplication. The context layer is that briefing, written into your infrastructure so the AI gets it automatically on every query.

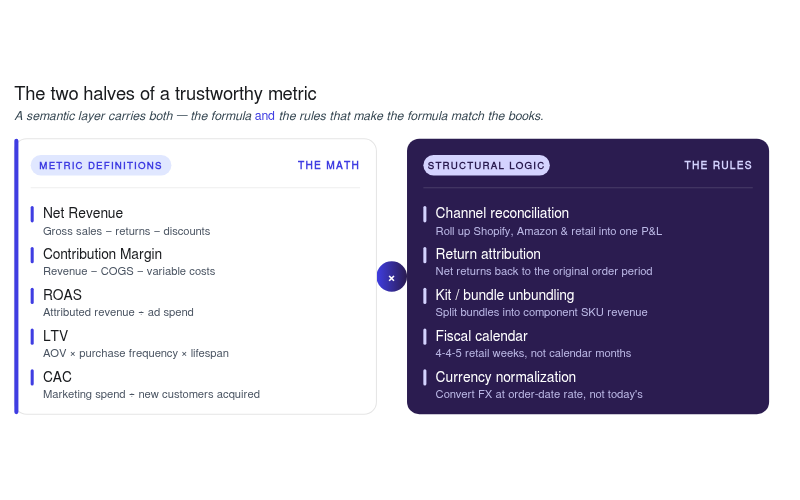

What a context layer defines:

- Metric definitions: The exact calculation logic for net revenue, contribution margin, ROAS, LTV, and CAC as your business uses them

- Dimension definitions: How channels, SKUs, cohorts, and time periods are segmented

- Join logic: Which tables connect to which, under what conditions

- Business rules: Fiscal calendar offsets, return attribution windows, date-effective COGS, marketplace fee structures
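The four categories above can be pictured as a small registry. This is a minimal sketch, not any vendor's actual schema: the class names, the `orders_modeled` table, and the field layout are all hypothetical, chosen only to show definitions living in one place instead of in people's heads.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Metric:
    name: str
    formula: str            # human-readable calculation logic
    source_table: str       # modeled table the metric reads from (hypothetical)
    required_joins: tuple = ()


@dataclass
class ContextLayer:
    metrics: dict = field(default_factory=dict)
    business_rules: dict = field(default_factory=dict)

    def register(self, metric: Metric) -> None:
        # One name, one definition: refuse silent redefinitions.
        if metric.name in self.metrics:
            raise ValueError(f"metric already defined: {metric.name}")
        self.metrics[metric.name] = metric

    def describe(self, name: str) -> str:
        m = self.metrics[name]
        return f"{m.name} = {m.formula} (from {m.source_table})"


layer = ContextLayer(business_rules={"fiscal_calendar": "4-4-5"})
layer.register(Metric(
    name="net_revenue",
    formula="gross_revenue - returns - discounts - tax",
    source_table="orders_modeled",
))
print(layer.describe("net_revenue"))
```

Rejecting a second registration of the same name is the design choice that matters here: a metric can only be changed deliberately, never overwritten by accident.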

Research across 522 enterprise queries found a 38% improvement in AI SQL accuracy when grounded in context-rich metadata, with gains reaching 2.15x on medium-complexity queries where business rules made the difference between a correct answer and a plausible guess.

Why AI Gets eCommerce Data Wrong Without a Context Layer

LLMs generate answers based on patterns, not business rules. When you ask an AI to query your eCommerce data, it does not have your company's definition of contribution margin, ROAS, LTV, or any other business concept. It fills gaps with its best statistical guess. McKinsey research found that over half of organizations have experienced negative outcomes from AI implementations, and the pattern is consistent: the AI lacked the business context to interpret the data correctly.

Over 80% of retailers are already using or piloting generative AI, according to NVIDIA's 2025 State of AI in Retail report. So, is adoption the bottleneck here? No. AI accuracy for eCommerce data is. And eCommerce makes that accuracy problem worse in ways most other industries do not face.

Multi-channel fragmentation. Shopify, Amazon, wholesale, and retail each record the same transaction differently. Without reconciliation logic, an AI query double-counts or misattributes revenue across channels.

Retroactive mutations. A return processed 60 days after the purchase changes that order's margin retroactively. Raw tables capture the return as a separate event. Only the context layer joins it back to the original transaction.

Metric ambiguity. Most eCommerce warehouses have multiple columns that could answer "what was our revenue?" The AI picks one. It rarely picks the one finance reconciles against.

Business logic absent from training data. Date-effective COGS, 3PL rate card tiers, kit unbundling for subscription boxes. None of this exists in any LLM's training data. It has to be explicitly defined.

Tribal knowledge. Which attribution window does marketing use? How does finance handle pre-orders placed in one month and shipped the next? When those rules live in people's heads instead of in infrastructure, the AI has no way to learn them.

What Bad AI Output Looks Like in Practice

A VP of Growth asks: "What was CAC payback by acquisition cohort for Q1, split by channel?"

The AI queries raw warehouse tables. It calculates acquisition cost using paid-media platform spend only, excluding affiliate commissions stored in an invoicing system, influencer fees tracked in spreadsheets, and a fixed agency retainer booked separately by finance. For payback, it pairs that incomplete CAC with a rolling LTV metric instead of the cohort-based contribution margin definition finance uses.

The output: 4.2 months.

At month-end close, finance reconciles the same cohorts using the governed contribution-margin model and lands closer to 7 months. The discrepancy comes from missing business context around cost attribution and metric definitions.
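To see how cost attribution alone can move the number, here is a small worked calculation. Every input is hypothetical and is not the data behind the figures above. It also varies only the cost side, whereas the scenario involves a second gap in how margin is defined. Payback is taken here as CAC divided by monthly contribution margin per customer, which is one common convention and an assumption in this sketch.

```python
def cac_payback_months(acquisition_costs, new_customers, monthly_margin_per_customer):
    """Months of contribution margin needed to recover the cost of one customer."""
    cac = sum(acquisition_costs.values()) / new_customers
    return cac / monthly_margin_per_customer


# Hypothetical cohort: 1,000 new customers, 10.0 of contribution margin each per month.
paid_media_only = {"paid_media": 40_000.0}
fully_loaded = {
    "paid_media": 40_000.0,
    "affiliate_commissions": 10_000.0,   # lives in an invoicing system
    "influencer_fees": 9_000.0,          # tracked in spreadsheets
    "agency_retainer": 6_000.0,          # booked separately by finance
}

print(cac_payback_months(paid_media_only, 1_000, 10.0))  # 4.0
print(cac_payback_months(fully_loaded, 1_000, 10.0))     # 6.5
```

The same cohort and the same margin give a payback that is more than 60% longer once the costs stored outside the ad platforms are included.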

What an eCommerce Context Layer Looks Like

A properly built eCommerce business context layer defines two categories of knowledge: how metrics are calculated and how data structures connect.

Metric Definitions the Context Layer Must Lock In

Net revenue means gross revenue minus returns, minus discounts, minus tax. Not "total_price." Not "subtotal." The specific formula your finance team reconciles to the P&L. The exact terms vary by brand: in one of our client engagements, the data team defined it as gross sales plus shipping minus discounts minus refunds. Whichever formula finance signs off on has to live in the context layer.
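Written as code, the first formula in this paragraph might look like the sketch below. The row and its field names are hypothetical, and the `total_price` value is included only to show that a similarly named raw column need not equal the governed figure.

```python
from decimal import Decimal


def net_revenue(order):
    """Gross revenue minus returns, minus discounts, minus tax."""
    return order["gross_revenue"] - order["returns"] - order["discounts"] - order["tax"]


# Hypothetical order row; amounts are Decimal to avoid float rounding on money.
order = {
    "gross_revenue": Decimal("100.00"),
    "returns": Decimal("10.00"),
    "discounts": Decimal("5.00"),
    "tax": Decimal("8.00"),
    "total_price": Decimal("103.00"),  # raw column an unguided query might pick
}

print(net_revenue(order))      # 77.00
print(order["total_price"])    # 103.00
```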

Contribution margin means net revenue minus date-effective COGS, minus channel-attributed ad spend, minus fulfillment cost, minus payment fees. Every component must be defined, because "contribution margin" without specifying which costs are included is a number that looks precise but means nothing.
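"Date-effective COGS" means the unit cost in force on the order date, not today's cost. A minimal sketch of that lookup and of the margin formula above follows. The cost history, function names, and amounts are hypothetical.

```python
from bisect import bisect_right
from datetime import date

# Hypothetical cost history for one SKU: (effective_from, unit_cost), sorted by date.
COST_HISTORY = [(date(2025, 1, 1), 10.0), (date(2025, 4, 1), 12.0)]


def cogs_on(order_date, history):
    """Return the unit cost that was in effect on order_date."""
    effective_dates = [d for d, _ in history]
    idx = bisect_right(effective_dates, order_date) - 1
    if idx < 0:
        raise ValueError("no cost in effect on that date")
    return history[idx][1]


def contribution_margin(net_revenue, order_date, units, ad_spend,
                        fulfillment, payment_fees, history=COST_HISTORY):
    """Net revenue minus date-effective COGS, ad spend, fulfillment, and payment fees."""
    cogs = cogs_on(order_date, history) * units
    return net_revenue - cogs - ad_spend - fulfillment - payment_fees


print(cogs_on(date(2025, 3, 31), COST_HISTORY))  # 10.0, the old cost still applies
print(contribution_margin(100.0, date(2025, 5, 1), 2, 20.0, 8.0, 3.0))  # 45.0
```

A query that applies the current cost to a March order would understate that order's margin, which is why the lookup is keyed on the order date.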

ROAS: Revenue divided by ad spend, but which revenue and which attribution window? The context layer removes that ambiguity.

LTV and CAC: Is it cohort-based or rolling? Which cost components are included? The definitions must be written down and enforced.
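The attribution window changes the answer even when the data does not. The sketch below takes ROAS as attributed revenue divided by ad spend and uses hypothetical conversions with a `days_since_click` field.

```python
def roas(conversions, ad_spend, window_days):
    """Attributed revenue divided by ad spend, for a given attribution window."""
    if ad_spend <= 0:
        raise ValueError("ad_spend must be positive")
    attributed = sum(
        c["revenue"] for c in conversions if c["days_since_click"] <= window_days
    )
    return attributed / ad_spend


# Hypothetical conversions for one campaign.
conversions = [
    {"revenue": 200.0, "days_since_click": 1},
    {"revenue": 100.0, "days_since_click": 5},
    {"revenue": 100.0, "days_since_click": 20},
]

print(roas(conversions, ad_spend=100.0, window_days=7))   # 3.0
print(roas(conversions, ad_spend=100.0, window_days=28))  # 4.0
```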

Structural Logic the Context Layer Must Encode

Channel reconciliation: How Shopify, Amazon, and wholesale orders are matched and deduplicated at the order grain.
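One way to picture deduplication at the order grain is shown below. It assumes every record carries a shared `external_order_id` and that a fixed source priority decides which copy wins. Both are hypothetical; real matching rules depend on how each channel syncs orders.

```python
# Assumption: lower number wins when the same order appears in several sources.
SOURCE_PRIORITY = {"amazon": 0, "shopify": 1, "wholesale": 2}


def reconcile_orders(orders):
    """Keep exactly one record per real-world order."""
    best = {}
    for order in orders:
        key = order["external_order_id"]
        current = best.get(key)
        if current is None or SOURCE_PRIORITY[order["source"]] < SOURCE_PRIORITY[current["source"]]:
            best[key] = order
    return list(best.values())


orders = [
    {"external_order_id": "A1", "source": "shopify", "amount": 50.0},
    {"external_order_id": "A1", "source": "amazon", "amount": 50.0},   # same order, second system
    {"external_order_id": "S2", "source": "shopify", "amount": 30.0},
]

reconciled = reconcile_orders(orders)
print(sum(o["amount"] for o in orders))      # 130.0, double-counted
print(sum(o["amount"] for o in reconciled))  # 80.0
```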

Return attribution: How refunds join back to originating orders and which metrics they adjust retroactively.
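A minimal sketch of joining refunds back to the originating order, so the adjustment lands in the month of purchase and not the month of the refund. Field names and amounts are hypothetical.

```python
from collections import defaultdict


def net_revenue_by_order_month(orders, refunds):
    """Subtract each refund from its originating order, then total by order month."""
    refunded = defaultdict(float)
    for refund in refunds:
        refunded[refund["order_id"]] += refund["amount"]

    totals = defaultdict(float)
    for order in orders:
        month = order["ordered_on"][:7]  # "YYYY-MM" from an ISO date string
        totals[month] += order["amount"] - refunded[order["order_id"]]
    return dict(totals)


orders = [
    {"order_id": "1001", "ordered_on": "2025-01-15", "amount": 120.0},
    {"order_id": "1002", "ordered_on": "2025-01-20", "amount": 80.0},
]
refunds = [
    # Processed 60 days after purchase, but it adjusts January, not March.
    {"order_id": "1001", "refunded_on": "2025-03-16", "amount": 120.0},
]

print(net_revenue_by_order_month(orders, refunds))  # {'2025-01': 80.0}
```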

Kit unbundling: How a subscription box with 4 SKUs gets split into component-level margin calculations.
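As a sketch, a kit's price can be allocated to its components in proportion to each SKU's standalone price. That allocation rule is an assumption made here for illustration; a brand may allocate by cost or by a fixed split. SKUs and prices are hypothetical.

```python
def unbundle_kit(kit_price, components):
    """Split a kit's price across its SKUs and compute component-level margin."""
    total_standalone = sum(c["standalone_price"] for c in components)
    rows = []
    for c in components:
        allocated = kit_price * c["standalone_price"] / total_standalone
        rows.append({
            "sku": c["sku"],
            "revenue": allocated,
            "margin": allocated - c["cogs"],
        })
    return rows


# Hypothetical subscription box sold for 60.0 with four SKUs.
components = [
    {"sku": "SKU-A", "standalone_price": 30.0, "cogs": 10.0},
    {"sku": "SKU-B", "standalone_price": 20.0, "cogs": 8.0},
    {"sku": "SKU-C", "standalone_price": 20.0, "cogs": 6.0},
    {"sku": "SKU-D", "standalone_price": 10.0, "cogs": 3.0},
]

for row in unbundle_kit(60.0, components):
    print(row)
```

The allocated revenue sums back to the kit price, so component-level margins still reconcile to the order total.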

Fiscal calendar: Whether the business runs 4-4-5 or standard months, and what that means for period comparisons.
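To illustrate why this matters for period comparisons, the sketch below maps a date to a 4-4-5 fiscal period. It assumes a 52-week year starting on a given date and ignores the occasional 53rd week. The start date is hypothetical.

```python
from datetime import date


def fiscal_period_445(day, fiscal_year_start):
    """Return the fiscal period (1-12) under a 4-4-5 week pattern."""
    week = (day - fiscal_year_start).days // 7  # 0-based week of the fiscal year
    if not 0 <= week < 52:
        raise ValueError("date falls outside the 52-week fiscal year")
    quarter, week_in_quarter = divmod(week, 13)  # each quarter is 4 + 4 + 5 weeks
    if week_in_quarter < 4:
        month_in_quarter = 0
    elif week_in_quarter < 8:
        month_in_quarter = 1
    else:
        month_in_quarter = 2
    return quarter * 3 + month_in_quarter + 1


start = date(2024, 12, 30)  # hypothetical fiscal year start
print(fiscal_period_445(date(2025, 1, 26), start))  # 1
print(fiscal_period_445(date(2025, 1, 27), start))  # 2, although the calendar month is January
```

An order placed on January 27 belongs to fiscal period 2 in this setup, so a query grouping by calendar month would not match a finance report grouped by fiscal period.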

A pre-modelled eCommerce data warehouse handles most of these definitions out of the box, with customization layers for brand-specific rules on top.

The eCommerce Data Journey That Feeds the Context Layer

The context layer sits at the top of a data stack that has to be built from the bottom up. To improve LLM accuracy for eCommerce data, every step in this sequence needs to be complete. Skip one, and the context layer has nothing reliable to govern.

Think of it like constructing a building. Raw data is materials from a dozen suppliers, each labeled differently. The modeling step standardizes everything into common units. The context layer is the blueprint that tells the builder how pieces connect and which codes apply. Without the blueprint, the structure may look right from outside but will not survive an audit.

Step 1: Ingestion. Raw data from every source flows into a central warehouse via an ELT pipeline. For eCommerce, this is harder than it sounds; Amazon Seller Central alone has dozens of overlapping report types, and Amazon retroactively changes data regularly. An eCommerce data pipeline built for these connectors handles ingestion without requiring brands to maintain each API themselves.

Step 2: Modeling. Raw tables are cleaned, joined, and transformed into datasets with business logic applied. Duplicates resolved, timezones reconciled. This is where most in-house stacks stall; eCommerce modeling (kit unbundling, return attribution, date-effective COGS) goes beyond standard dbt patterns.

Step 3: Context layer. Metric definitions, join logic, and business rules are formalized on top of modeled datasets. This is the layer any AI tool queries, making AI answers accurate for eCommerce questions.

Important: Skipping step two and pointing AI directly at raw tables is the most common cause of AI inaccuracy in eCommerce. The context layer cannot fix what the modeling layer never cleaned.
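The timezone reconciliation mentioned in the modeling step can be sketched as follows. The idea is to convert every source timestamp to a single reporting timezone before assigning it to a day or month. The fixed UTC offset is a simplifying assumption that ignores daylight saving time.

```python
from datetime import datetime, timedelta, timezone


def reporting_date(timestamp_iso, reporting_utc_offset_hours):
    """Convert an offset-aware ISO timestamp to a date in the reporting timezone."""
    ts = datetime.fromisoformat(timestamp_iso)
    if ts.tzinfo is None:
        raise ValueError("timestamp must carry a UTC offset")
    reporting_tz = timezone(timedelta(hours=reporting_utc_offset_hours))
    return ts.astimezone(reporting_tz).date()


# The same order lands in different months depending on the reporting timezone.
print(reporting_date("2025-01-31T23:30:00-08:00", 0))   # 2025-02-01
print(reporting_date("2025-01-31T23:30:00-08:00", -8))  # 2025-01-31
```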

How the Context Layer Connects to AI Tools

The context layer does not talk to AI tools on its own. It gets exposed through a connection mechanism, and the choice determines whether queries are governed or unguarded.

Pattern 1: Direct-to-raw-tables (no context layer). The AI queries whatever it can access. No definitions enforced, no joins validated. Hallucinations are the default outcome.

Pattern 2: BI tool with a semantic model (partial context). Tools like Looker or dbt Semantic Layer define metrics for BI queries but often do not expose those definitions to LLMs in a structured way. The AI may bypass the semantic model depending on how the connection is configured.

Pattern 3: MCP with a governed semantic layer (full context). The AI connects through a purpose-built MCP server for eCommerce, and every query routes through defined metric and join logic. The AI cannot access raw tables directly. This architecture makes AI answers accurate for eCommerce queries consistently, regardless of who asks or how they phrase it.
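The routing idea behind Pattern 3 can be shown without any protocol details. The sketch below is not an MCP server and uses no MCP library. It only illustrates a gateway that hands out pre-approved queries and refuses anything else. The metric name, table, and SQL text are hypothetical.

```python
# Hypothetical governed metrics mapped to pre-approved SQL.
GOVERNED_SQL = {
    "net_revenue": (
        "SELECT SUM(net_revenue) FROM orders_modeled WHERE order_month = :month"
    ),
}


def governed_query(metric_name):
    """Return the approved SQL for a metric, or refuse if it is not governed."""
    try:
        return GOVERNED_SQL[metric_name]
    except KeyError:
        raise PermissionError(f"'{metric_name}' is not a governed metric") from None


print(governed_query("net_revenue"))
```

Because the caller can only name a metric and never supply SQL, there is no path to the raw tables through this function.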

Watch for this signal: Ask your AI tool the same metric question in two phrasings: "contribution margin last month" and "profit margin last 30 days." If the results differ, the AI is guessing at definitions rather than querying governed ones.
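One way a governed layer keeps phrasings consistent is an explicit alias table that maps every accepted wording to one canonical metric. In the sketch below, the aliases and the decision to treat "profit margin" as a synonym are assumptions a business would have to make for itself. The date window ("last month" versus "last 30 days") would need its own rule and is not handled here.

```python
# Hypothetical alias table: every accepted phrasing points at one canonical metric.
ALIASES = {
    "contribution margin": "contribution_margin",
    "profit margin": "contribution_margin",  # assumption: treated as a synonym
}


def resolve_metric(question):
    """Map a free-text question to exactly one governed metric name."""
    text = question.lower()
    matches = {canonical for phrase, canonical in ALIASES.items() if phrase in text}
    if len(matches) != 1:
        raise LookupError(f"cannot resolve a single governed metric in: {question!r}")
    return matches.pop()


print(resolve_metric("contribution margin last month"))  # contribution_margin
print(resolve_metric("profit margin last 30 days"))      # contribution_margin
```

Raising an error on an unknown phrase, instead of guessing, is the behavior that separates a governed lookup from a statistical best guess.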

Same Question, Two Different Answers

A CMO at a DTC brand asks their AI analytics tool: "What was our contribution margin by channel last month, and which channel improved most?"

Without a Context Layer

The AI queries raw order tables and sums a revenue column. It uses total_price from Shopify and total_sales from Amazon without reconciling overlapping orders. It subtracts COGS using a standard cost column not updated since Q1. Ad spend is not joined because the AI was never shown the marketing tables. Fulfillment costs are missing entirely.

The result looks like a contribution margin figure but is closer to gross margin, inflated by 15 to 20 percentage points. The AI presents it with a chart and zero caveats.

With a Context Layer

The AI queries the contribution margin metric as defined in the semantic layer: net revenue (already net of returns), minus date-effective COGS, minus channel-attributed ad spend, minus fulfillment, minus payment fees. Orders are already reconciled at the order grain. Returns are attributed to originating orders.

The AI returns accurate contribution margin by channel, identifies which channel improved most against the prior month, and surfaces the calculation logic so the CMO can verify.

Same model. Same question. Same warehouse. AI accuracy in eCommerce comes down to this single architectural decision.

As Jason Panzer, President of HexClad, put it: "We go to Saras Pulse and get our daily contribution margin reporting. We get all of our marketing metrics by channel, by category, even down to the SKU. Everything is pulled in automatically."

Building vs. Buying the Context Layer

For eCommerce brands evaluating how to connect LLMs to their data warehouse without hallucinations, the decision comes down to domain expertise, timeline, and maintenance appetite.

The Build Path

A data team can construct a context layer using dbt Semantic Layer, Cube.dev, or LookML. For a mid-market brand with 5 to 10 data sources, this typically takes an experienced analytics engineer 6 to 12 weeks, plus ongoing maintenance every time the business adds channels or updates cost structures. Generic modeling patterns do not cover eCommerce-specific logic like kit unbundling, 3PL rate card tiers, or Amazon settlement reconciliation.

The Buy Path

Managed platforms compress that timeline from months to days by shipping pre-built metric definitions for common eCommerce questions alongside the structural reconciliation logic generic tools skip. Saras Pulse delivers a pre-modelled eCommerce data warehouse with certified datasets, and the AI eCommerce analyst layer connects through a governed MCP server. Brand-specific rules get layered on top.

The Honest Tradeoff

Building gives full control with significant upfront and ongoing investment. Buying gives faster time to accurate AI analytics for eCommerce with tested logic underneath. The hybrid path is most common for Shopify brands between $20M and $200M: managed foundation for pre-built eCommerce logic, custom layers for brand-specific rules.

Momentous took this approach, moving to an AI-ready data foundation and achieving near-real-time insights on certified datasets. Read the full case study →

Conclusion

AI accuracy in eCommerce is a context problem. The brands getting trustworthy answers today are the ones that invested in the layer between the model and the data: governed definitions, reconciled datasets, and business rules encoded at the infrastructure level.

Talk to the data consultants at Saras Analytics to see what accurate AI analytics looks like when the eCommerce context layer is already built.
